5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

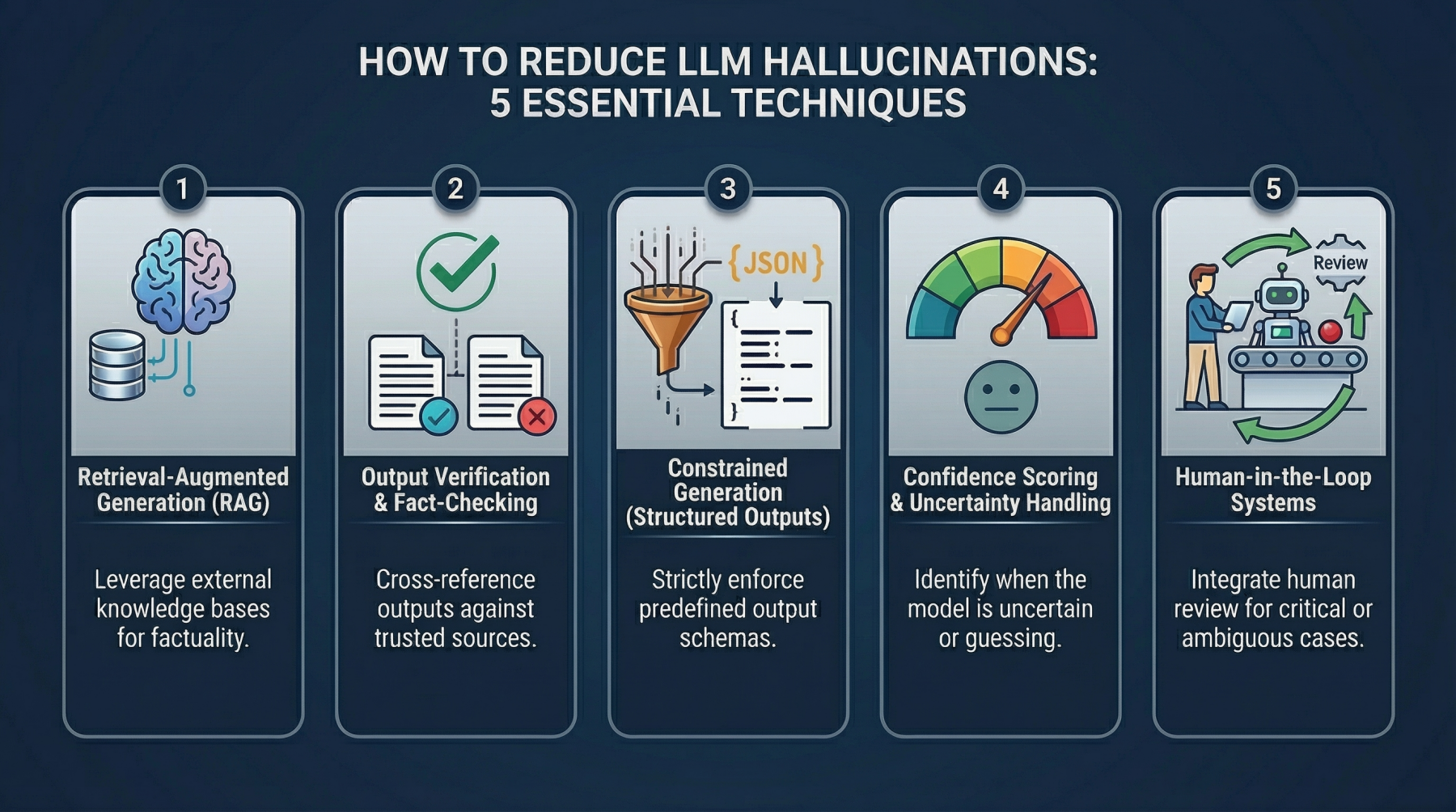

This guide explores why large language models hallucinate and introduces system-level techniques to detect and reduce fabricated outputs in production environments.

You'll learn:

- The root causes of LLM hallucinations

- Five actionable techniques for detecting and mitigating false outputs

- Implementation patterns with practical examples

5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

Image by Editor

Introduction

A developer I know asked an LLM to document a payment API. The output looked flawless: clean structure, professional tone, detailed endpoints. One problem. The API didn't exist. The model had fabricated endpoints, parameters, and response formats convincing enough to pass initial review. The error surfaced only during integration, when nothing worked.

That's hallucination in production. The model invents information and presents it confidently, with no indication anything is wrong.

This isn't an edge case. Hallucinations appear across systems in subtle but damaging ways: fabricated citations in research tools, incorrect legal references, nonexistent product features in support responses. Individually, these seem like minor errors. At scale, they erode trust and create real risk.

Early mitigation efforts centered on prompt engineering—clearer instructions, stricter constraints, better phrasing. That helps, but only to a point. Prompts guide the model but don't fundamentally alter how it generates responses. When the system lacks accurate information, it still attempts to produce something plausible.

Teams are now treating hallucination as an architectural challenge, not just a prompting issue. Rather than relying solely on better inputs, they're building validation layers around the model to detect, verify, and control outputs.

What Causes LLM Hallucinations?

Understanding why hallucinations occur helps clarify how to prevent them. The causes aren't obscure, but they're easy to miss when outputs sound authoritative.

First, there's a lack of grounding. Language models don't access real-time or verified data unless explicitly connected to external sources. They generate responses from patterns learned during training, not by fact-checking against live information. When precise answers are unavailable, the model fills gaps with plausible-sounding content.

Overgeneralization compounds the problem. Models trained on vast, diverse datasets learn broad patterns rather than specific truths. Faced with a narrow question, they may synthesize fragments from similar contexts into something that sounds correct but isn't.

There's also inherent pressure to always respond. Language models are optimized for helpfulness and engagement. Rather than admitting uncertainty, they generate the most probable answer they can construct. That's useful for conversation but risky when accuracy is critical.

Technique 1: Retrieval-Augmented Generation (RAG)

The most effective way to reduce hallucinations is straightforward: stop depending solely on what the model learned during training and provide it with verified data when it needs to respond.

That's the core of retrieval-augmented generation (RAG). Instead of asking the model to answer from memory alone, you first retrieve relevant information from an external source, then inject that content as context. The process is simple: a user submits a query, the system searches a knowledge base for related material, and the model generates a response grounded in that retrieved data.

This shifts how the model operates. Without retrieval, it relies on probabilistic patterns, which is where hallucinations originate. With retrieval, it works from concrete information. It's no longer guessing what might be true—it's reasoning from what's been provided.

The distinction between model memory and external knowledge matters. Model memory is static, reflecting training data that may be outdated, incomplete, or too general. External knowledge is dynamic, updatable, and domain-specific. RAG moves the source of truth from the model to your curated data.

In practice, this typically involves a vector database. Documents are converted to embeddings and stored for semantic search. When a query arrives, the system retrieves the most relevant text chunks and includes them in the prompt before generation.

Here's a basic Python example illustrating the flow:

from sentence_transformers import SentenceTransformer

import faiss

import numpy as np

from openai import OpenAI

# Step 1: Load embedding model

embedder = SentenceTransformer("all-MiniLM-L6-v2")

# Step 2: Sample knowledge base

documents = [

"Our refund policy allows returns within 30 days.",

"Shipping takes 3 to 5 business days.",

"You can track your order using the tracking link sent via email."

]

# Step 3: Convert documents to embeddings

doc_embeddings = embedder.encode(documents).astype("float32")

# Step 4: Store embeddings in FAISS index

index = faiss.IndexFlatL2(doc_embeddings.shape[1])

index.add(doc_embeddings)

# Step 5: Query

query = "How long does delivery take?"

query_embedding = embedder.encode([query]).astype("float32")

# Step 6: Retrieve most relevant document

_, indices = index.search(query_embedding, k=1)

retrieved_doc = documents[indices[0][0]]

# Step 7: Generate response using retrieved context

client = OpenAI()

response = client.chat.completions.create(

model="gpt-4o-mini",

messages=[

{"role": "system", "content": "Answer using the provided context only."},

{"role": "user", "content": f"Context: {retrieved_doc}\n\nQuestion: {query}"}

]

)

print(response.choices[0].message.content)This implementation demonstrates a complete RAG pipeline in action. The code begins by initializing the all-MiniLM-L6-v2 embedding model, which converts text into numerical vectors. A simple knowledge base containing three customer service documents is then transformed into embeddings and stored in a FAISS index for efficient similarity search.

When a user query arrives—in this case, asking about delivery time—the system encodes it using the same embedding model and searches the FAISS index to find the most semantically similar document. The retrieved context is then passed to GPT-4o-mini, which generates a natural language response grounded in the actual knowledge base content rather than relying solely on the model's training data.